Imagine this: Your CEO just asked how much productivity your team gained after rolling out AI coding assistants. You pause. You could say "25% more code is being written" — but does that actually mean anything?

If you've been tasked with justifying your AI investments with metrics like lines of code or token usage, you're not alone. But you're also asking the wrong question.

This article is for CTOs, software directors, and engineering leads who are ready to move beyond vanity metrics — and who want to measure what really matters. At Deventura, this shift in thinking changed everything for us. Here's how.

The Metric Mirage: Why Lines of Code and Token Counts Mislead

In an era of AI-assisted development, traditional metrics like LoC (lines of code) are not just outdated — they're dangerous.

- More lines don't mean more value. An AI can easily generate 200 lines of boilerplate, but that says nothing about quality or purpose.

- Token usage is a poor proxy. Heavy use of Anthropic Claude or GitHub Copilot shows that a tool was used, not that the resulting contribution was meaningful.

- Developers may game the system. If they're being measured by code volume, don't be surprised when they stop simplifying or refactoring.

Productivity isn't about how much code you write. It's about what gets delivered, how maintainable it is, and whether your team is getting faster, not sloppier.

What Is Real Efficiency Gain, Then?

The answer lies in measuring the right behaviors. At Deventura, we've developed the Six Pillars Framework as the alternative to vanity metrics. Instead of counting lines or tokens, we measure what actually drives performance, including:

- Complexity tackled — Are developers solving harder problems with AI assistance?

- Delivery consistency — Is output steady and sustainable?

- Review engagement — Are they collaborating more effectively?

- Technical focus — Are they building deeper expertise?

We've observed measurable increases in developer efficiency when teams adopt this approach. Developers who use AI tools effectively show faster merge times, tackle higher-complexity work, and maintain better team collaboration.

What mattered was the behavioral shift, not the raw output. That's the kind of gain your board actually wants to hear about.
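One way to put a number on "faster merge times" is to compare the median time-to-merge before and after the AI tooling rollout. The sketch below is illustrative only: the `MergeRequest` record, its field names, the rollout date, and the sample data are all assumptions made for this example, not Deventura's implementation.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class MergeRequest:
    # Hypothetical record; the field names are assumptions for illustration.
    opened_at: datetime
    merged_at: datetime


def median_hours_to_merge(mrs):
    """Median hours between opening and merging, or None if there are no MRs."""
    hours = [(mr.merged_at - mr.opened_at).total_seconds() / 3600 for mr in mrs]
    return median(hours) if hours else None


def compare_merge_times(mrs, rollout):
    """Split MRs at the rollout date and return (before, after) medians in hours."""
    before = [mr for mr in mrs if mr.opened_at < rollout]
    after = [mr for mr in mrs if mr.opened_at >= rollout]
    return median_hours_to_merge(before), median_hours_to_merge(after)


# Made-up sample data: two MRs before the rollout, two after.
sample = [
    MergeRequest(datetime(2024, 1, 2, 9), datetime(2024, 1, 3, 9)),
    MergeRequest(datetime(2024, 1, 10, 9), datetime(2024, 1, 12, 9)),
    MergeRequest(datetime(2024, 3, 4, 9), datetime(2024, 3, 4, 21)),
    MergeRequest(datetime(2024, 3, 11, 9), datetime(2024, 3, 12, 3)),
]
before, after = compare_merge_times(sample, rollout=datetime(2024, 2, 1))
print(f"median hours to merge: before={before}, after={after}")
```

The median is used rather than the mean so that a single long-running merge request does not dominate the comparison.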

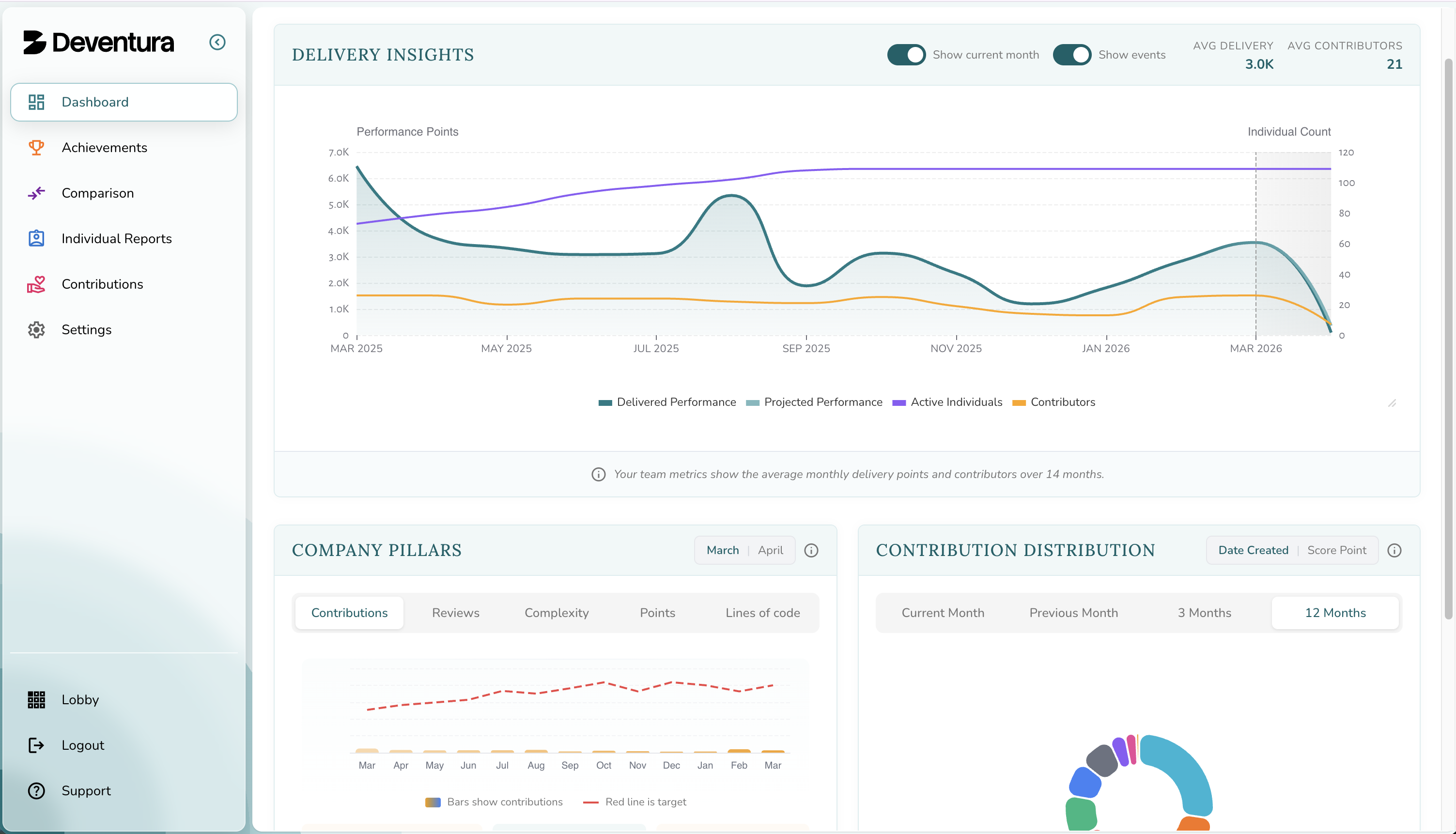

How Deventura Makes This Measurable

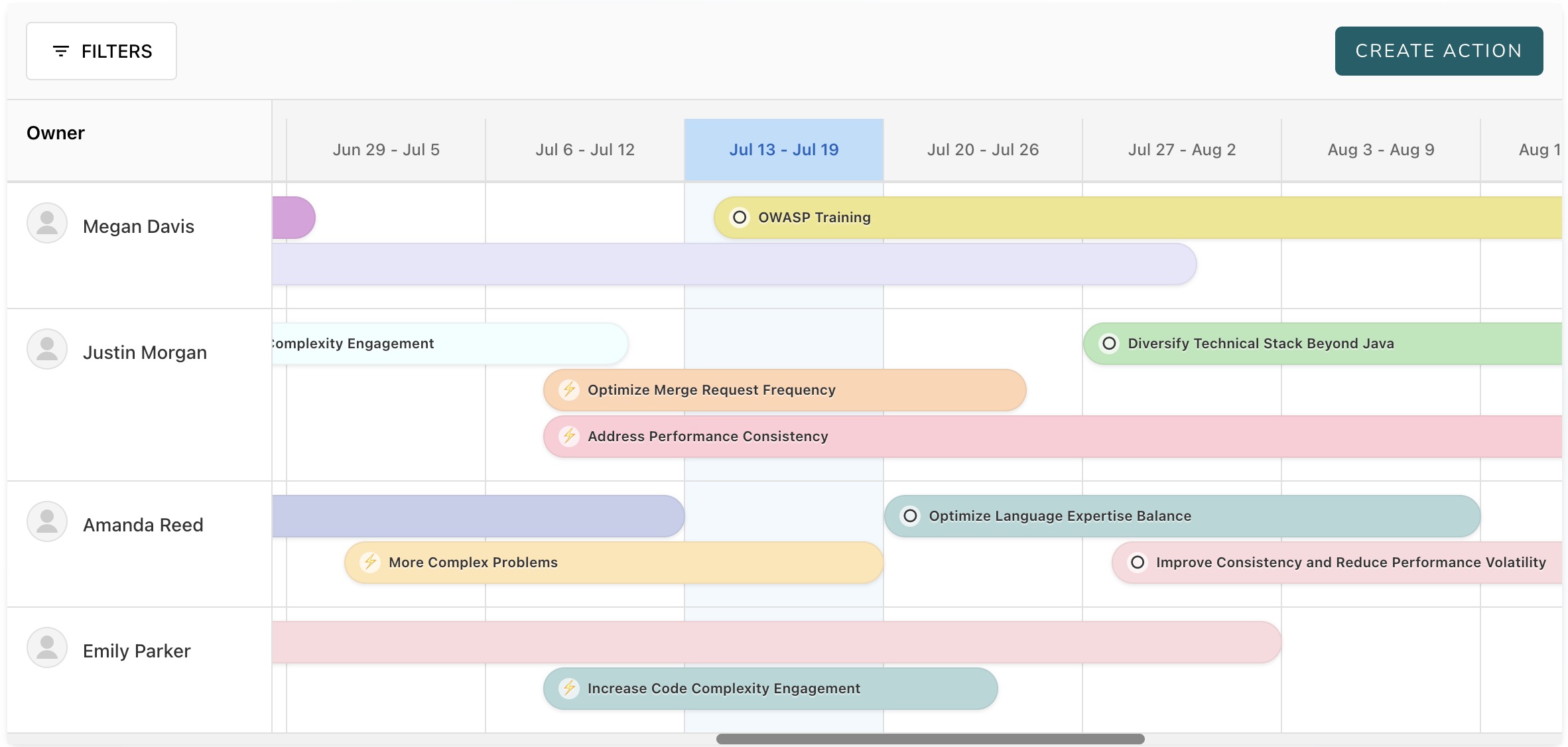

Our Six Pillars Framework provides the structure, but measurement alone isn't enough. That's where our AI Performance Coach transforms data into action.

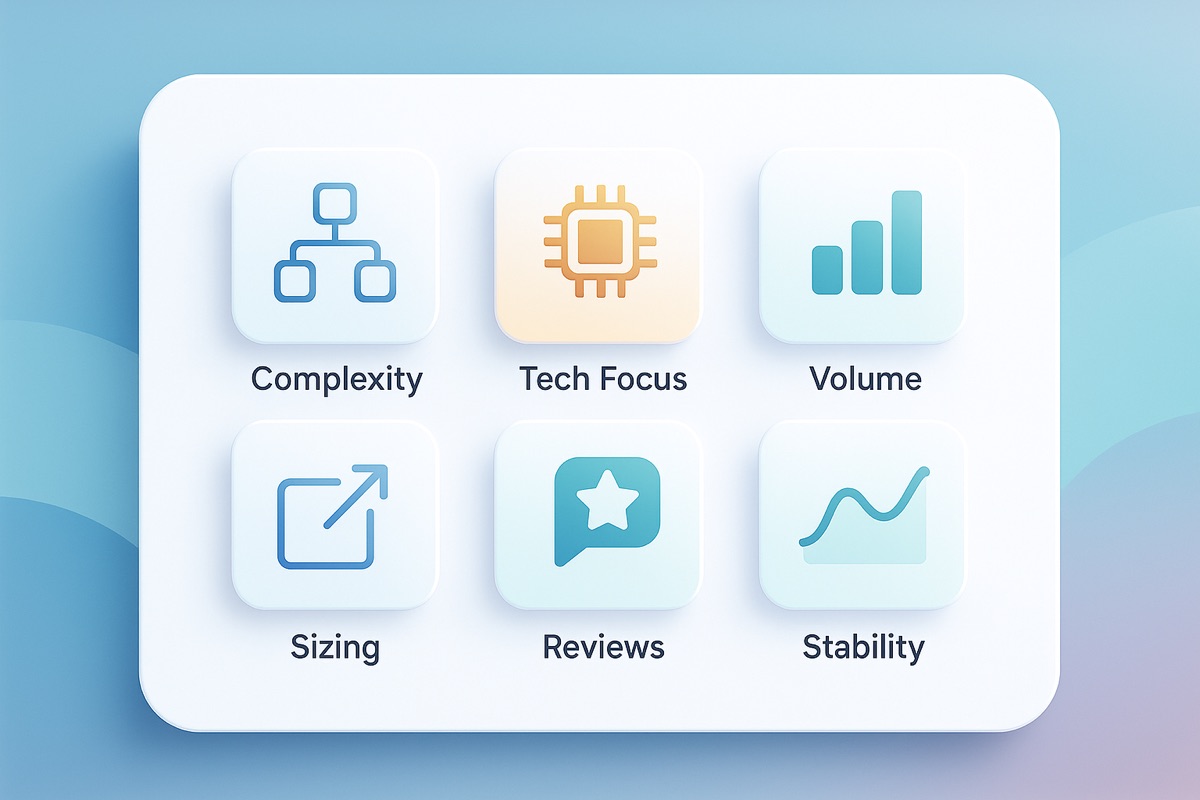

The Six Pillars track:

- Complexity Ratio — Are devs solving hard problems, or avoiding them?

- Tech Focus — Are they building deep expertise in core stacks?

- Merge Request Volume — Are they delivering consistently?

- Size Balance — Are merge requests reviewable and meaningful?

- Review Engagement — Are they lifting the team or working in a silo?

- Trend Stability — Are they improving steadily, or burning out?
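To make the six pillars more concrete, here is a minimal sketch of how each one could be turned into a number for a single developer. Everything in it is an assumption made for illustration: the `MR` record and its fields, the thresholds (`small`, `large`, `hard`), and the formulas themselves. It is not how Deventura actually scores these pillars.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class MR:
    # Hypothetical merge request record; every field is an assumption.
    week: int           # index of the week in which the MR was merged
    lines_changed: int  # added plus removed lines
    complexity: int     # 1 (trivial) to 5 (hard), from any rating source
    tech: str           # primary stack touched, e.g. "python"


def pillar_scores(mrs, reviews_given, weeks, small=10, large=400, hard=4):
    """Return illustrative scores for the six pillars over a window of `weeks`."""
    if not mrs or weeks <= 0:
        raise ValueError("need at least one MR and a positive window")
    n = len(mrs)
    per_week = Counter(mr.week for mr in mrs)
    weekly = [per_week.get(w, 0) for w in range(weeks)]
    avg = mean(weekly)
    # Steadier weekly output gives a value closer to 1.
    stability = 1 - min(1.0, pstdev(weekly) / avg) if avg else 0.0
    return {
        # Share of MRs rated at or above the "hard" threshold.
        "complexity_ratio": sum(mr.complexity >= hard for mr in mrs) / n,
        # Share of MRs in the developer's most frequent stack.
        "tech_focus": Counter(mr.tech for mr in mrs).most_common(1)[0][1] / n,
        # Average MRs per week.
        "mr_volume": n / weeks,
        # Share of MRs inside an assumed "reviewable" size band.
        "size_balance": sum(small <= mr.lines_changed <= large for mr in mrs) / n,
        # Reviews given per MR authored.
        "review_engagement": reviews_given / n,
        "trend_stability": stability,
    }


# Made-up sample: four MRs over four weeks, six reviews given to teammates.
sample = [
    MR(week=0, lines_changed=120, complexity=4, tech="python"),
    MR(week=1, lines_changed=80, complexity=2, tech="python"),
    MR(week=2, lines_changed=900, complexity=5, tech="go"),
    MR(week=3, lines_changed=40, complexity=3, tech="python"),
]
scores = pillar_scores(sample, reviews_given=6, weeks=4)
for name, value in scores.items():
    print(f"{name}: {value:.2f}")
```

The point of the sketch is that none of these values depends on raw code volume: a 900-line merge request lowers the size balance score instead of raising a productivity number.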

Our AI Performance Coach analyzes these patterns and provides personalized recommendations for each developer. Instead of generic advice, managers get specific, actionable coaching suggestions based on actual behavior patterns. This combination of measurement plus guidance is how we've helped teams move from gut-feel leadership to data-backed coaching.

Seeing AI Gains in the Real World

We've seen firsthand how developers using tools like Claude or Copilot outperform those who don't, but the difference only becomes visible when you measure the right things.

One team using Deventura saw:

- A 45% rise in meaningful code contributions after AI tooling adoption

- Stabilized merge request sizes, reducing review delays by 30%

- Improved team engagement, tracked via review activity

These aren't vanity stats. These are behaviors that correlate with long-term team performance.

Why This Matters to the Boardroom

Boards and company owners are asking the right question — "Is AI making us more efficient?" — but they often get the wrong answers.

Your job as a tech leader is to answer that question with credibility. That means:

- Rejecting token counts and LoC as productivity metrics

- Showing how developer behavior and team health are evolving

- Backing it all up with structured, trustworthy data

Deventura was built to give you exactly that.

If You're Facing These Questions, Let's Talk

If you're struggling to report concrete productivity gains from AI tools — or facing increasing pressure to "prove" efficiency — you're not alone.

We built Deventura for leaders like you. It provides:

- Nightly evaluations of merge requests across GitHub and GitLab

- AI-powered scoring across technical and architectural dimensions

- Long-term trend insights across individuals and teams

- A behavior-first framework to build performance, not just track it

Final Thoughts

AI is transforming how developers work, but how we measure that work has to evolve too. This shift helped us stop counting lines and start spotting patterns. It showed us which developers were thriving with AI tools, and where we needed to step in. If you want to do the same, we're here.