AI coding tools do not reduce the need for engineering discipline. They expose the lack of it.

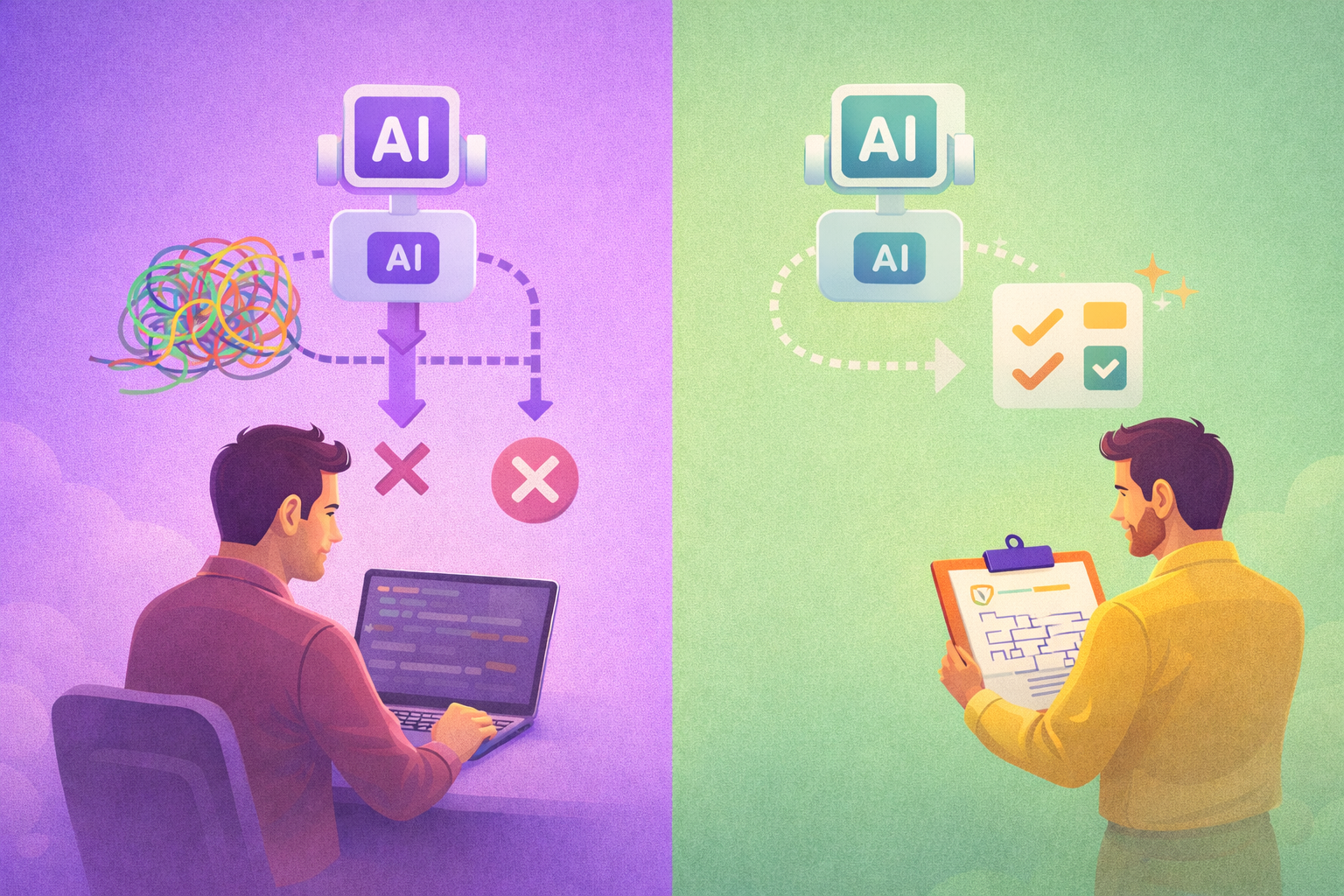

A developer recently shared a detailed account of building a complex project with AI assistance. The first attempt — loosely prompted, lightly reviewed — produced a working prototype in weeks. It was also unmaintainable spaghetti that had to be thrown away entirely.

The second attempt worked. But only because the developer brought tight architectural specs, disciplined prompting, continuous review, and a clear mental model of the system. The AI accelerated execution. The human provided the judgment.

This pattern keeps repeating across teams we talk to. The engineers getting the most from AI are not the ones prompting fastest. They are the ones who understand system design deeply enough to guide the output and catch when it drifts.

The Pattern: Fast Prototype vs. Maintainable System

The story above is not an isolated case. It is the default trajectory for teams adopting AI coding tools without adjusting their engineering practices.

AI makes it trivially easy to produce working code quickly. But "working" and "maintainable" are different standards. A prototype that handles the happy path is not the same as production code that handles edge cases, follows consistent patterns, and can be understood by the next developer who touches it.

The teams that succeed with AI-assisted development are the ones that recognize this gap early and build the discipline to close it: clear specifications before prompting, architectural guardrails during generation, and rigorous review after output.

Code Quality Matters More, Not Less

LLMs perform measurably better in well-structured codebases with clear patterns and strong typing. Spaghetti code degrades AI performance the same way it degrades human performance.

This creates a compounding effect. Teams with clean, well-architected codebases get better AI output, which maintains code quality, which in turn produces even better AI output. Teams with messy codebases get worse AI output, which further degrades quality, creating a downward spiral.

For engineering leaders, this means that investments in code quality — consistent naming conventions, clear module boundaries, comprehensive type definitions, meaningful test coverage — are no longer just about long-term maintainability. They directly improve your team's ability to leverage AI tools effectively today.
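
To make "comprehensive type definitions" concrete, here is a minimal sketch in Python. The order domain and every name in it (`Order`, `OrderStatus`, `shipped_total_cents`) are hypothetical and chosen only for illustration. The two functions compute the same thing, but only the second states the data shape and units in a form that a reviewer, a type checker, or an AI assistant can read directly.

```python
from dataclasses import dataclass
from enum import Enum


class OrderStatus(Enum):
    PENDING = "pending"
    SHIPPED = "shipped"


@dataclass(frozen=True)
class Order:
    """Hypothetical domain type; field names are illustrative."""
    order_id: str
    total_cents: int  # integer cents, so the unit is explicit
    status: OrderStatus


def shipped_total_loose(orders):
    # Implicit contract: which keys exist, and is "total" in dollars or cents?
    return sum(o["total"] for o in orders if o["status"] == "shipped")


def shipped_total_cents(orders: list[Order]) -> int:
    """Sum the totals of shipped orders; inputs and units are in the signature."""
    return sum(o.total_cents for o in orders if o.status is OrderStatus.SHIPPED)


typed_orders = [
    Order("a", 1200, OrderStatus.SHIPPED),
    Order("b", 800, OrderStatus.PENDING),
]
print(shipped_total_cents(typed_orders))  # 1200
```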

The Bottleneck Has Shifted

Raw code output is no longer the constraint. Architecture, review capacity, and the ability to maintain a coherent mental model of your system are.

When a developer can generate hundreds of lines of code in minutes, the limiting factor shifts to:

- Architectural clarity — Can you specify what needs to be built clearly enough for AI to produce coherent output?

- Review throughput — Can your team verify AI-generated code at the rate it is being produced?

- System comprehension — Does someone still hold the mental model of how all these pieces fit together?

- Design coherence — Are the generated components following consistent patterns, or is each one solving the same problem differently?

These are fundamentally human capabilities. They cannot be automated away. And they require deliberate investment from engineering leadership.
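
The review throughput question can be put in rough numbers. The sketch below is a deliberately simple model with made-up figures (lines produced and reviewed per week are assumptions, not measurements): whenever production exceeds review capacity, the unreviewed backlog grows by the difference every week.

```python
def review_backlog(weeks: int, produced_per_week: int, reviewed_per_week: int) -> list[int]:
    """Return the unreviewed-line backlog at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # The backlog cannot go negative: spare review capacity is simply unused.
        backlog = max(0, backlog + produced_per_week - reviewed_per_week)
        history.append(backlog)
    return history


# Hypothetical numbers: output doubles from 2,000 to 4,000 lines per week
# while review capacity stays at 2,500 lines per week.
before = review_backlog(4, produced_per_week=2000, reviewed_per_week=2500)
after = review_backlog(4, produced_per_week=4000, reviewed_per_week=2500)
print(before)  # [0, 0, 0, 0]
print(after)   # [1500, 3000, 4500, 6000]
```

Real review work is not linear in line count, so treat this as a way to frame the conversation rather than a forecast.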

Compounding Discipline Into AI Productivity

Teams that invest in engineering rigor — clear design specs, meaningful test coverage, consistent patterns — will compound their AI productivity gains. Teams that skip it will hit a wall fast.

The practical implications for engineering leaders:

- Invest in specifications before code. The time spent on clear design docs and architectural decisions pays off many times over when AI handles the implementation.

- Build review capacity deliberately. If your team's code output doubles but review capacity stays flat, you are accumulating risk, not delivering faster.

- Measure quality alongside speed. Track defect rates, rework frequency, and codebase health metrics to catch the moment when velocity is outrunning discipline.

- Treat codebase health as infrastructure. Clean code is not a luxury. It is the foundation that determines how much value your team extracts from AI tools.
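
One way to measure quality alongside speed is to compare rates between periods rather than raw counts. The following is a sketch under stated assumptions: `SprintStats`, its fields, and the flagging rule are hypothetical, and how a team defines "defect" or "rework" will vary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintStats:
    """Hypothetical per-sprint counts; field names are illustrative."""
    merged_changes: int
    changes_with_defects: int  # changes later linked to a bug report
    changes_reworked: int      # changes substantially rewritten soon after merge


def defect_rate(s: SprintStats) -> float:
    return s.changes_with_defects / s.merged_changes if s.merged_changes else 0.0


def rework_rate(s: SprintStats) -> float:
    return s.changes_reworked / s.merged_changes if s.merged_changes else 0.0


def velocity_outrunning_discipline(prev: SprintStats, curr: SprintStats) -> bool:
    """Flag a sprint where throughput rose and a quality rate got worse."""
    faster = curr.merged_changes > prev.merged_changes
    worse = (defect_rate(curr) > defect_rate(prev)
             or rework_rate(curr) > rework_rate(prev))
    return faster and worse


prev = SprintStats(merged_changes=40, changes_with_defects=4, changes_reworked=6)
curr = SprintStats(merged_changes=80, changes_with_defects=16, changes_reworked=20)
print(velocity_outrunning_discipline(prev, curr))  # True
```

In this example, merged changes doubled while the defect rate went from 10% to 20%, which is the kind of signal the metrics above are meant to surface early.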

The question is not whether your team should use AI coding tools. It is whether your engineering practices are strong enough to make that adoption sustainable.