Picture this: You're preparing for annual reviews. Some developers immediately come to mind — always visible in meetings, quick to respond on Slack. Others? Quiet, independent. You pause. Are you about to reward charisma over contribution?

Meanwhile, your team dreads review season. Managers scramble to write meaningful feedback, but most comments end up vague — "needs more confidence" or "good technical skills." Remote and hybrid teams amplify the problem: low visibility, unconscious bias, and outdated metrics.

The result? Performance reviews that frustrate, demotivate — and often do more harm than good.

The True Cost of Broken Reviews

When you base evaluations on intuition, the damage is measurable:

- Gallup estimates that time spent on performance reviews costs an organization of 10,000 employees between $2.4 million and $35 million a year in lost productivity

- Only 14% of employees strongly agree that their performance reviews inspire them to improve

- 95% of managers admit their review process needs improvement

- Harvard Business Review has called annual reviews "archaic", and by some estimates more than one-third of U.S. companies are abandoning them

But the hidden costs run deeper:

- High performers who don't self-promote get overlooked

- Strong relationships get mistaken for strong results

- Top talent leaves when they don't feel seen or valued

- Equity suffers as confidence is mistaken for competence

Subjectivity Isn't Just Flawed — It's Dangerous

Traditional reviews rely on perceptions — not performance data. Without data, most managers fall back on what they can see. Who speaks up. Who seems "busy." Who they personally like.

But visibility isn't performance. And affinity isn't impact.

Common pitfalls include:

- Infrequent cadence: annual or biannual reviews deliver feedback that is stale rather than actionable

- One-sided viewpoints: managers often miss year-round contributions from quiet, remote team members

- Bias creeps in: favoritism, recency effects, proximity bias, and gender or personality biases distort outcomes

- 360-degree reviews promise objectivity but often amplify bias, as those with louder voices dominate the process

Harvard Business Review notes that in subjective evaluations, confidence is often mistaken for competence — especially across gender, culture, or personality differences. That's a serious equity issue. When reviews are driven by personality instead of proof, even well-meaning managers reinforce bias.

The Path Forward: Data-Driven Objectivity

The good news? There's a better way.

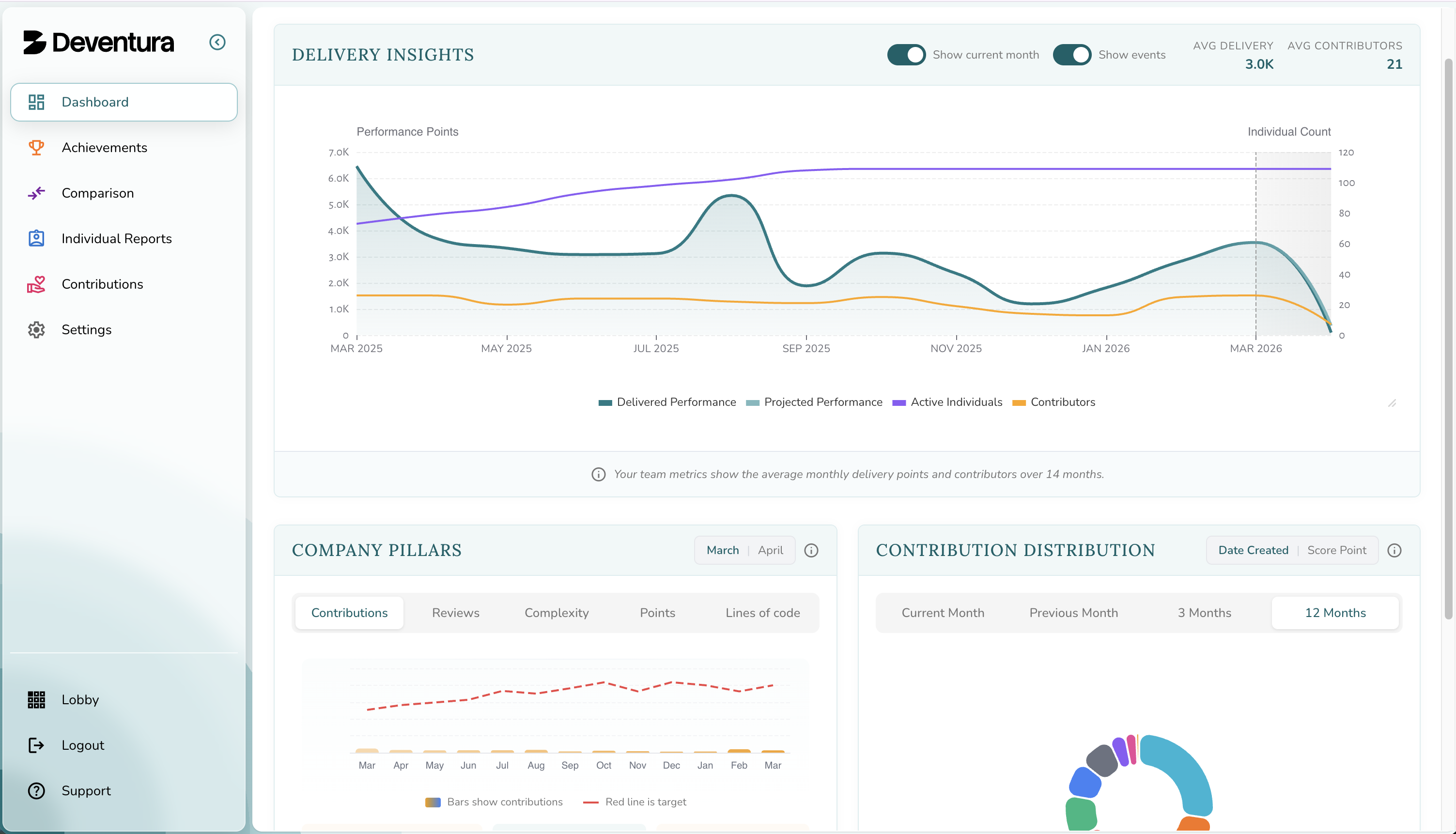

By anchoring reviews in behavioral data — such as merge request complexity, review engagement, and delivery consistency — you move from opinions to observable patterns. You don't have to guess who's growing, contributing, or stagnating. You can see it.

Research suggests that feedback tools capturing peer insights and productivity trends outperform static reviews: they can reduce bias, surface hidden contributors, and strengthen the link between effort, value, and recognition.

In hybrid teams, this becomes critical: trust grows when data, not proximity, defines performance.

A Framework for Fair Evaluation: The Six Pillars

At Deventura, we believe in measuring what matters. That's why we built the Six Pillars of Developer Performance — a framework that captures not just how much someone ships, but how they contribute to team health and long-term outcomes.

The Six Pillars measure:

- Complexity Ratio — Tackling hard problems vs. easy wins

- Tech Focus — Building depth, not bouncing between stacks

- Merge Request Volume — Consistent, sustainable output

- Size Balance — Right-sized, reviewable contributions

- Review Engagement — Sharing knowledge and lifting others

- Trend Stability — Healthy consistency over time

This is how you separate confidence from competence. Visibility from value. Noise from performance.

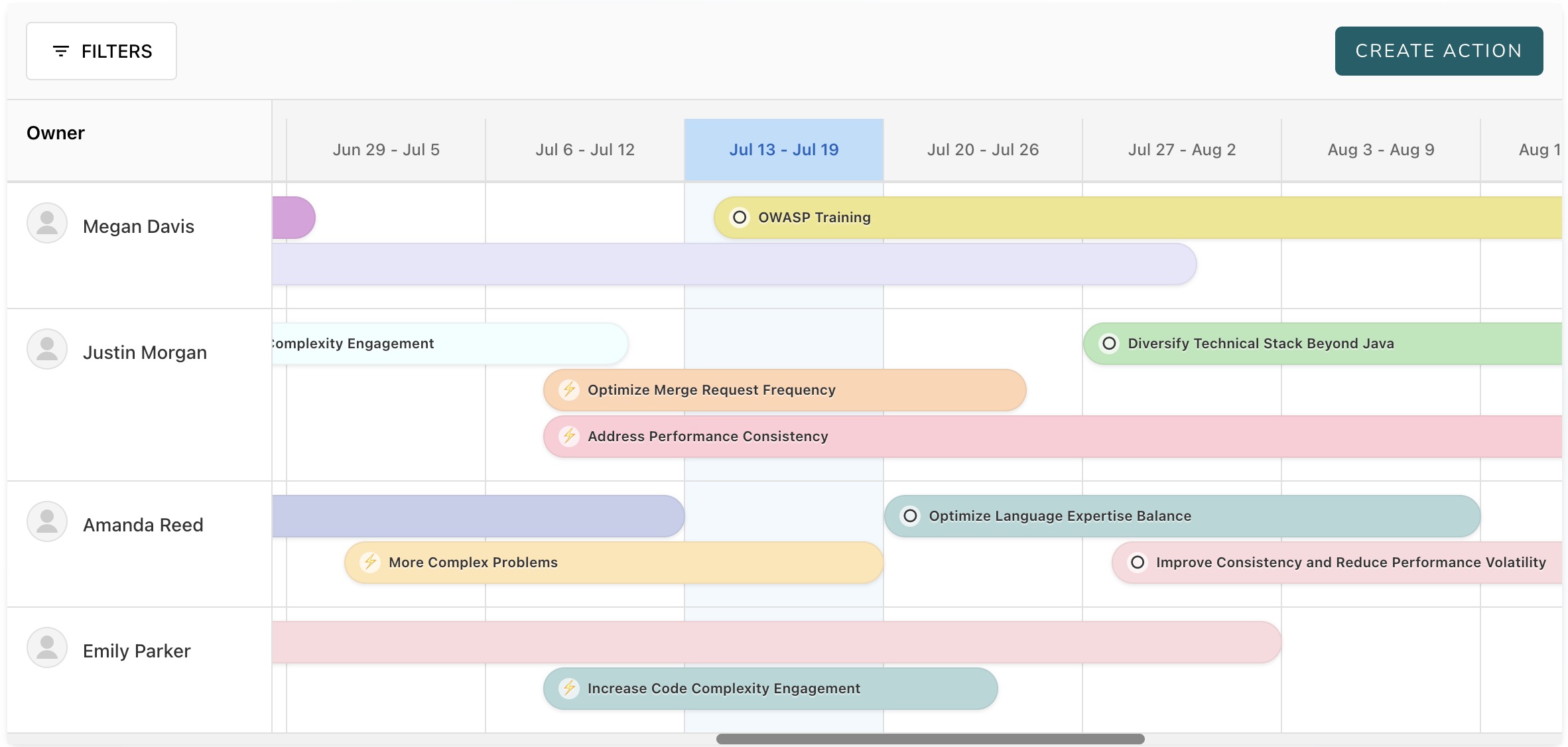
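The pillars are named above, but their formulas are not spelled out here. As a purely hypothetical sketch, and not Deventura's actual calculation, two of them could be approximated from merge request data: Size Balance as the share of merge requests at or below a reviewable size, and Trend Stability as one minus the coefficient of variation of weekly merge request counts. The `MergeRequest` record, its fields, and the 400-line threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class MergeRequest:
    # Hypothetical record; real data would come from your Git hosting platform.
    week: int           # week index in which the MR was merged
    lines_changed: int  # additions plus deletions


def size_balance(mrs: list[MergeRequest], max_lines: int = 400) -> float:
    """Hypothetical Size Balance: fraction of MRs at or below max_lines.

    The 400-line default is an assumption, not a documented threshold.
    """
    if not mrs:
        return 0.0
    small = sum(1 for mr in mrs if mr.lines_changed <= max_lines)
    return small / len(mrs)


def trend_stability(mrs: list[MergeRequest], weeks: list[int]) -> float:
    """Hypothetical Trend Stability: 1 - coefficient of variation of weekly MR counts.

    Returns 1.0 for a perfectly even cadence and falls toward 0.0 as output
    becomes more erratic; the result is floored at 0.0.
    """
    if not weeks:
        return 0.0
    # Count merged MRs per week, including weeks with zero output.
    counts = [sum(1 for mr in mrs if mr.week == w) for w in weeks]
    avg = mean(counts)
    if avg == 0:
        return 0.0
    return max(0.0, 1 - pstdev(counts) / avg)


if __name__ == "__main__":
    history = [
        MergeRequest(week=1, lines_changed=120),
        MergeRequest(week=1, lines_changed=80),
        MergeRequest(week=2, lines_changed=650),
        MergeRequest(week=3, lines_changed=200),
        MergeRequest(week=3, lines_changed=90),
    ]
    print(f"Size Balance:    {size_balance(history):.2f}")               # 0.80
    print(f"Trend Stability: {trend_stability(history, [1, 2, 3]):.2f}")  # 0.72
```

Dividing by the mean makes the stability score independent of absolute throughput, so a steady low-volume contributor is not penalized relative to a steady high-volume one.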

From Measurement to Meaningful Coaching

The point isn't just to measure people — it's to build people.

Use Six Pillars insights to:

- Start growth-oriented 1:1 conversations, anchored in observable trends

- Align career development plans with actual behavior

- Build a culture of transparency, guidance, and continuous improvement

- Create psychological safety where everyone knows what's expected — and what's being measured

Our AI Performance Coach turns these metrics into conversation starters. Rather than leaving managers to interpret raw data on their own, it gives them specific talking points and coaching suggestions tailored to each developer's patterns, making performance conversations more productive and less stressful for everyone involved.

Objective frameworks level the playing field: every developer is assessed against the same criteria, not against how visible they are.

Real-Time Feedback Replaces Annual Guesswork

What's the alternative to annual reviews? Real-time, objective feedback grounded in behavioral data.

Continuous feedback systems:

- Capture contributions as they happen, not months later

- Surface patterns and trends before they become problems

- Enable course correction in real time

- Build trust through transparency and consistency

It's Time to Stop Playing Performance Roulette

If your performance reviews feel vague, political, or disconnected from actual work — it's not your fault. Most systems weren't designed for how we work today.

But it is your opportunity to lead differently.

At Deventura, we help engineering leaders replace traditional review cycles with continuous feedback loops built on metrics that reflect real behavior, not just visibility.

By embracing continuous, data-driven metrics and pairing them with meaningful coaching, you can transform reviews from guesswork into growth engines.