The Linux kernel just drew a clear line on AI-assisted code — and every engineering leader should take note.

The maintainers' new policy is straightforward: use whatever AI tools you want, but you sign off on everything you submit. You own the bugs, the licensing, and the regressions. No "the model did it" excuses.

What They Got Right: No Bans, No Bureaucracy

What's smart is what they didn't do. They didn't ban AI. They didn't add bureaucracy or extra approval layers. They simply reinforced the core principle of good engineering: the human is accountable.

This is a leadership decision worth studying. Rather than reacting with fear or excessive process, the kernel maintainers asked a simple question: what is the minimum policy that preserves quality and accountability without slowing down contributors who are using AI tools responsibly?

The answer was elegant: ownership. If you submit it, you own it. The tool is irrelevant. The output is yours.

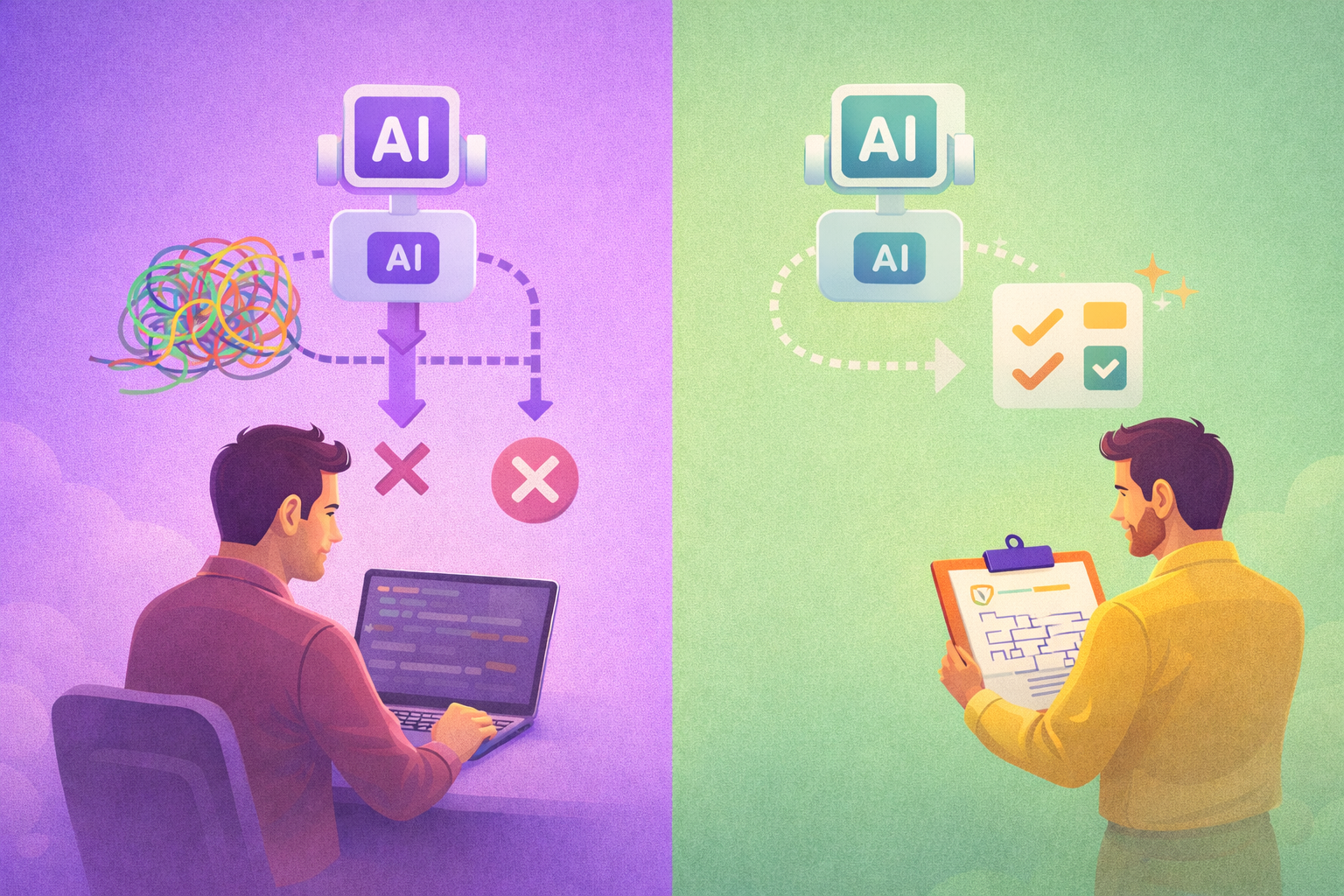

For engineering leaders considering their own AI policies, this is a strong template. It avoids two common failure modes: banning AI tools entirely (and losing productivity), or allowing them without any accountability framework (and accumulating risk).

The Part That Should Make Leaders Pause

This policy neatly solves the liability question. But it doesn't solve the review bandwidth problem.

When contributors can generate patches faster than maintainers can review them, you don't have a policy issue — you have a throughput issue. And throughput issues compound. Backlogs grow, review quality drops under pressure, and the very accountability the policy establishes starts to erode in practice even as it holds on paper.
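To make the compounding concrete, here is a minimal sketch of the backlog arithmetic. The function name and the weekly numbers are hypothetical, chosen only for illustration; they are not figures from the kernel project.

```python
def simulate_backlog(arrivals_per_week, reviews_per_week, weeks, initial_backlog=0):
    """Return the number of unreviewed patches at the end of each week.

    Assumes (for illustration) constant arrival and review rates.
    """
    backlog = initial_backlog
    history = []
    for _ in range(weeks):
        # New patches arrive, reviewers clear what they can; backlog never goes negative.
        backlog = max(0, backlog + arrivals_per_week - reviews_per_week)
        history.append(backlog)
    return history


# Hypothetical team: reviewers can handle 20 patches a week.
balanced = simulate_backlog(arrivals_per_week=20, reviews_per_week=20, weeks=4)
doubled = simulate_backlog(arrivals_per_week=40, reviews_per_week=20, weeks=4)

print(balanced)  # [0, 0, 0, 0]
print(doubled)   # [20, 40, 60, 80]
```

With output doubled and review capacity flat, the queue in this toy model grows by a full week of review work every week. Nothing about the policy changed; only the arrival rate did.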

The Linux kernel can absorb this because it has decades of review culture, a deep bench of experienced maintainers, and a subsystem structure that distributes load. Most engineering organizations have few, if any, of these advantages.

The Real Challenge: Managing Accelerated Velocity

The real challenge going forward isn't whether to allow AI-assisted code. It's building review processes, quality gates, and metrics that can handle the new speed without burning out your senior engineers.

Concretely, this means:

- Scaling review capacity alongside code output. If your team's generation speed doubles but review bandwidth stays flat, you are building a backlog, not shipping faster.

- Investing in automated quality gates. Static analysis, test coverage requirements, and architectural linting become force multipliers when code volume increases.

- Tracking reviewer load as a first-class metric. Burnout among senior engineers is the hidden cost of unmanaged AI acceleration.

- Building review culture deliberately. The skills that make someone a good reviewer (systems thinking, pattern recognition, edge-case awareness) need explicit development time.
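Tracking reviewer load does not need heavy tooling to start. The sketch below is one possible shape for it, assuming you can export open review assignments as (patch, reviewer) pairs; the names, the data, and the threshold of three open reviews are all hypothetical.

```python
from collections import Counter


def reviewer_load(assignments):
    """Count open reviews per reviewer from (patch_id, reviewer) pairs."""
    return Counter(reviewer for _, reviewer in assignments)


def overloaded(load, max_open_reviews):
    """Return reviewers whose open-review count exceeds the threshold, sorted by name."""
    return sorted(name for name, count in load.items() if count > max_open_reviews)


# Hypothetical snapshot of open review assignments.
open_reviews = [
    ("p1", "alice"), ("p2", "alice"), ("p3", "bob"),
    ("p4", "alice"), ("p5", "carol"), ("p6", "alice"),
]

load = reviewer_load(open_reviews)
print(overloaded(load, max_open_reviews=3))  # ['alice']
```

A count like this is crude, since it ignores patch size and complexity, but it is enough to show when review work is piling up on one senior engineer.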

Accountability Without Throughput Is Just Liability

A policy that says "you own what you submit" is necessary but not sufficient. If the organizational systems around that policy can't keep pace with the volume of submissions, accountability becomes theoretical.

Teams that figure out how to manage this accelerated velocity — not just enable it — will pull ahead. The policy question is settled. The operational question is where the real work begins.