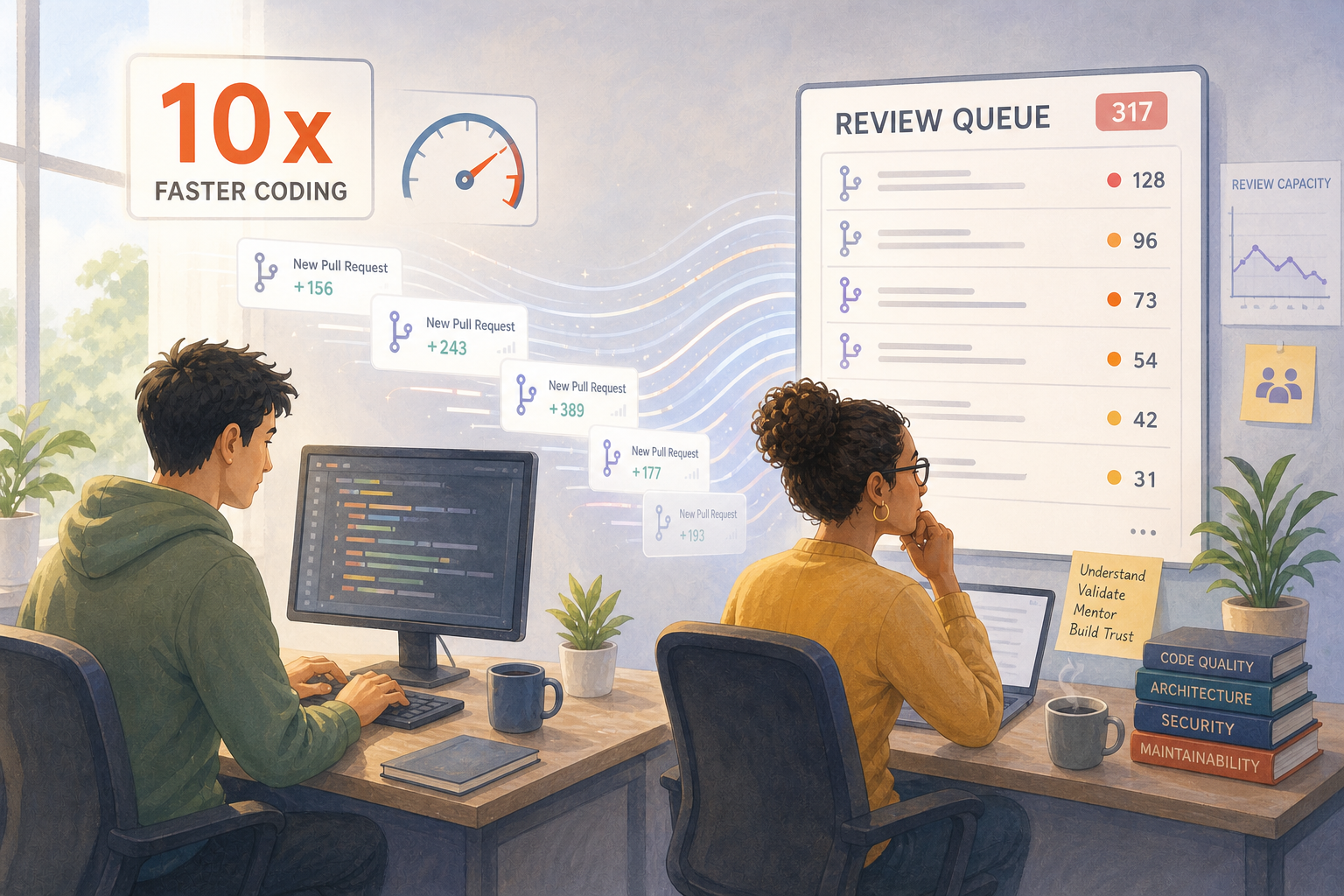

If AI makes coding 10x faster, why are review queues getting longer, not shorter?

Zig's Striking Rationale

The Zig project just published its rationale for banning LLM-generated contributions. Their core argument isn't anti-AI ideology. It's something more interesting: "We are not bottlenecked by coding."

Read that again. An ambitious systems project is telling us that typing speed was never the constraint. The constraints are review capacity, contributor trust, and the slow accumulation of judgment that turns a newcomer into a maintainer.

This Flips the Productivity Narrative

When we measure engineering performance purely by output velocity, we miss what actually creates durable software: the human investment in reviewing, mentoring, and growing the people who write the code.

A flood of AI-generated PRs doesn't accelerate a project if every one of them shifts cognitive load onto an already-stretched maintainer. The output side speeds up; the integration side gets buried. Merges are capped by review capacity, so net throughput stalls at best, and it falls once the people doing the reviewing burn out faster than you can replace them.

Most "AI is making us faster" stories implicitly assume that review capacity is infinite. It isn't.

The Metrics That Matter Are Changing

Cycle time still counts. Deployment frequency still counts. But the leading indicators of healthy engineering organizations now include things like:

- Review throughput — not just how fast code is written, but how fast it gets meaningfully evaluated.

- Contributor retention — whether the engineers doing the cognitive heavy lifting are sticking around or quietly looking for the exit.

- The ratio of code that gets meaningfully understood versus merely merged — because merged is not the same as integrated.

- Maintainer load — how concentrated the review burden is, and whether the team is creating new senior engineers or just exhausting the existing ones.

None of these are new ideas. They were always part of healthy engineering. What is new is that AI has made them much easier to ignore — and much more dangerous to ignore.
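To make a few of these indicators concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `PullRequest` record, its field names, and the sample data are hypothetical and not taken from any real tool or from Zig's process. It covers review throughput, review wait, maintainer load, and a rough proxy for "merged but not understood"; contributor retention needs data over a longer period and is left out.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    """Hypothetical record; the field names are assumptions, not a real API."""
    author: str
    opened_day: int
    reviewer: Optional[str] = None      # who gave the first substantive review
    reviewed_day: Optional[int] = None  # None while the PR is still waiting
    merged: bool = False


def reviews_per_week(prs, window_days):
    """Review throughput: substantive reviews completed per 7 days."""
    reviewed = sum(1 for pr in prs if pr.reviewed_day is not None)
    return reviewed * 7 / window_days


def median_review_wait(prs):
    """Median days between opening a PR and its first review."""
    waits = [pr.reviewed_day - pr.opened_day
             for pr in prs if pr.reviewed_day is not None]
    return median(waits) if waits else None


def top_reviewer_share(prs):
    """Maintainer load: fraction of all reviews done by the busiest reviewer."""
    counts = Counter(pr.reviewer for pr in prs if pr.reviewer is not None)
    total = sum(counts.values())
    return max(counts.values()) / total if total else 0.0


def merged_unreviewed_share(prs):
    """Rough proxy for 'merged, not understood': merged PRs with no review."""
    merged = [pr for pr in prs if pr.merged]
    if not merged:
        return 0.0
    return sum(1 for pr in merged if pr.reviewed_day is None) / len(merged)


# Made-up sample covering a 14-day window.
sample = [
    PullRequest("ana", 0, "kim", 2, True),
    PullRequest("ben", 1, "kim", 5, True),
    PullRequest("cy", 3, "lee", 4, False),
    PullRequest("dee", 6, None, None, True),
    PullRequest("eli", 9, "kim", 13, True),
]

print(reviews_per_week(sample, window_days=14))
print(median_review_wait(sample))
print(top_reviewer_share(sample))
print(merged_unreviewed_share(sample))
```

In this made-up sample, one reviewer handles three of the four reviews and one of the four merged PRs went in with no review at all. Those are the kinds of signals that output velocity alone would hide.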

Two Sides of the Same Equation

AI raises the floor on what individuals can produce. It also raises the amount that teams must review, validate, and integrate. The leaders who win the next decade will be the ones who measure both sides of that equation.

That means recognizing that "speed" and "capacity" are different problems. Speed is about how fast a single contributor can move. Capacity is about how much the system as a whole can absorb. Optimizing one without the other creates exactly the dynamic Zig is reacting to: more output than the project can responsibly accept.
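The gap between speed and capacity can be illustrated with a toy queue model. This is a sketch under simple assumptions, not a description of any real project: the function name, the weekly numbers, and the fixed review capacity are all hypothetical, and real reviewers do not behave this uniformly.

```python
def simulate_backlog(weeks, prs_opened_per_week, review_capacity_per_week):
    """Toy model: return (backlog after each week, total PRs reviewed).

    Assumes a constant inflow of PRs and a fixed weekly review capacity.
    """
    backlog = 0
    reviewed_total = 0
    history = []
    for _ in range(weeks):
        backlog += prs_opened_per_week
        # Reviewers can only absorb up to their capacity, whatever the inflow.
        reviewed = min(backlog, review_capacity_per_week)
        backlog -= reviewed
        reviewed_total += reviewed
        history.append(backlog)
    return history, reviewed_total


# Hypothetical numbers: output triples, review capacity stays at 12 per week.
before, reviewed_before = simulate_backlog(4, 10, 12)
after, reviewed_after = simulate_backlog(4, 30, 12)

print(before, reviewed_before)
print(after, reviewed_after)
```

Under these assumptions, tripling the output side lifts reviewed work from 40 to 48 PRs over four weeks, while the unreviewed queue grows from zero to 72. The speed changed a lot; the capacity did not change at all.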

Where Is Your Real Bottleneck?

Zig's answer for their project was a blanket policy, which makes sense given their structure. Most commercial engineering teams have more nuanced choices: where to deploy AI heavily, where to invest in review and mentoring infrastructure, where to deliberately slow the output side down so the integration side can keep up.

The question to ask is not "how much faster can we get?" It is: where is our team's real bottleneck right now — writing code, or understanding it?

If the honest answer is the second one, then more AI output will not help. Better measurement and better investment in the human side of engineering will.