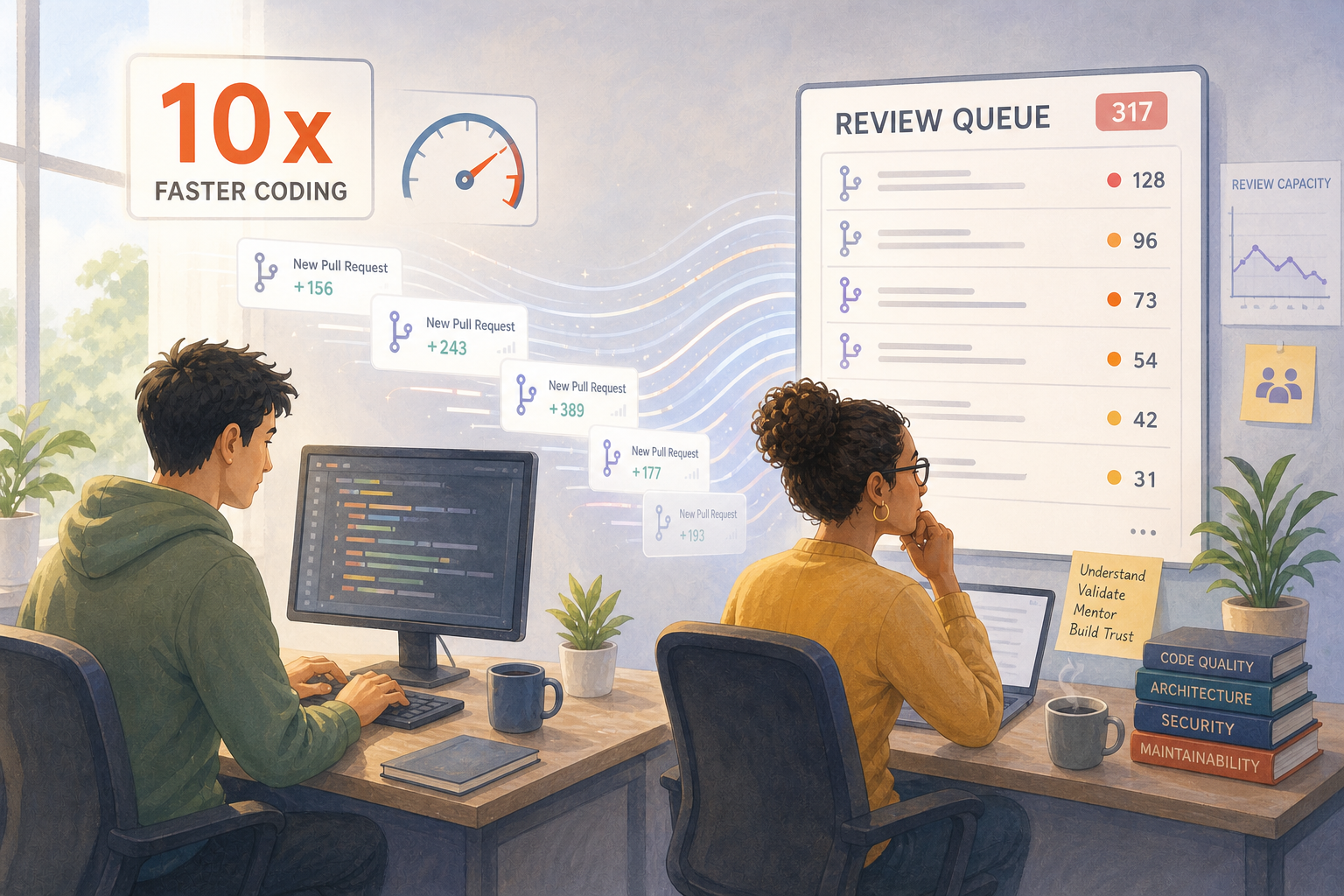

If your team's code output just doubled, has your ability to evaluate that code doubled too?

The Conversation Engineering Leaders Are Having Right Now

There's a growing conversation in engineering circles about the gap between AI-accelerated code production and the human systems built to review, validate, and maintain it.

The uncomfortable truth: the entire software development lifecycle was designed around the assumption that a developer produces a few hundred lines of code per day. That assumption is breaking.

Our take: AI didn't create undisciplined engineering. It exposed and amplified whatever discipline — or lack of it — was already there. Strong teams are getting dramatically faster. Weak teams are accumulating invisible risk at unprecedented speed.

A Concrete Example

A team of 6 engineers used to ship around 20 PRs per week. After adopting AI coding tools, that jumped to 60 PRs per week.

On paper, everything looks great:

- Output is up 3x

- Cycle time per PR appears lower

- Leadership sees momentum

But under the surface:

- Review time per PR drops from 25 minutes to 8 minutes

- Senior engineers start skimming instead of reviewing deeply

- Architectural inconsistencies start creeping in across services

- Two weeks later, production defects increase by 40%

- A critical bug slips through, triggering a 2-day rollback and hotfix cycle

Nothing broke immediately. The system just couldn't keep up with the new volume.

The Bottleneck Didn't Disappear — It Moved

The team didn't suddenly become worse. Their review and validation capacity stayed flat while code production tripled.

The bottleneck didn't disappear. It moved from writing code to understanding it.
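A quick back-of-the-envelope check on the example's numbers makes the "capacity stayed flat" point concrete. This is only a sketch: the function and variable names are ours, and it assumes review time per PR is a reasonable stand-in for review effort.

```python
def weekly_review_minutes(prs_per_week: int, minutes_per_pr: float) -> float:
    """Total reviewer time spent per week, in minutes."""
    return prs_per_week * minutes_per_pr


# Figures from the example above.
before = weekly_review_minutes(20, 25)  # 500 minutes per week
after = weekly_review_minutes(60, 8)    # 480 minutes per week

# If total review time stays at the old level, this is all each PR can get.
affordable_minutes_per_pr = before / 60  # roughly 8.3 minutes

# Review hours per week needed to keep the old 25-minute depth at 60 PRs.
hours_needed_at_old_depth = weekly_review_minutes(60, 25) / 60  # 25.0 hours

print(before, after, round(affordable_minutes_per_pr, 1), hours_needed_at_old_depth)
```

Total review time barely moved, from 500 to 480 minutes a week. Spread across three times as many PRs, that budget works out to about 8 minutes each, which is what the team ended up spending. Holding the old depth would have taken 25 hours of review per week instead of a little over 8.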

This is the pattern playing out across organizations right now. The metrics most leaders watch — output, cycle time, throughput — tell a story of acceleration. The metrics they don't watch — review depth, rework rate, post-merge defects — tell a different story underneath.

Traditional Output Proxies Are Now Misleading

The leaders we talk to are realizing that traditional output proxies — lines of code, PR count, story points — were already imperfect. Now they're actively misleading.

When the cost of producing code drops dramatically, the signal value of "we produced more code" drops with it. What was a rough proxy for productive work becomes a near-meaningless number that can be inflated by tooling alone.

What Actually Matters Now

What matters more than ever:

- Cycle time from idea to validated production change. Not how fast a PR gets opened, but how fast a verified improvement reaches users.

- Review quality and depth, not just review throughput. Reviews that catch real problems — not reviews that close fast.

- Rework rate and post-merge defect signals. How often does "shipped" code come back as a bug or a refactor?

- Where bottlenecks have shifted. Hint: it's rarely the keyboard anymore.
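To make these signals less abstract, here is a minimal sketch of how they could be computed from per-change records. Everything in it is an assumption for illustration: the `ChangeRecord` shape, the field names, and the sample data are hypothetical, and review minutes are only a rough proxy for review depth.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class ChangeRecord:
    # Hypothetical record shape; adapt the fields to whatever your tooling exports.
    started_at: datetime               # when work on the idea began
    validated_at: Optional[datetime]   # when the change was verified in production, if ever
    review_minutes: float              # rough proxy for review depth
    reworked: bool                     # later reverted, hotfixed, or rewritten
    post_merge_defects: int            # defects traced back to this change


def summarize(records: list[ChangeRecord]) -> dict:
    """Summarize the signals listed above for a batch of changes."""
    if not records:
        raise ValueError("need at least one record")
    # Only changes that were actually validated count toward cycle time.
    cycle_days = [
        (r.validated_at - r.started_at).total_seconds() / 86400
        for r in records
        if r.validated_at is not None
    ]
    return {
        "median_cycle_days": median(cycle_days) if cycle_days else None,
        "median_review_minutes": median(r.review_minutes for r in records),
        "rework_rate": sum(r.reworked for r in records) / len(records),
        "defects_per_change": sum(r.post_merge_defects for r in records) / len(records),
    }


# Made-up sample data, not measurements from any real team.
sample = [
    ChangeRecord(datetime(2024, 1, 1), datetime(2024, 1, 3), 25, False, 0),
    ChangeRecord(datetime(2024, 1, 2), datetime(2024, 1, 6), 8, True, 1),
    ChangeRecord(datetime(2024, 1, 3), None, 6, False, 0),
    ChangeRecord(datetime(2024, 1, 4), datetime(2024, 1, 10), 10, True, 2),
]
summary = summarize(sample)
print(summary)
```

The useful part is tracking these numbers next to PR count over time, so a rise in volume that comes with a rising rework rate or shrinking review time shows up before the incident report does.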

The Teams That Thrive

The teams that thrive in this shift aren't the ones generating the most code. They're the ones who know which signals to trust when the volume goes up and the visibility goes down.

That is the leadership skill of this moment: not just adopting AI, but rebuilding the measurement layer around it so you can actually tell what is working — and what is quietly accumulating risk you'll see in next quarter's incident report.

What's the metric your team relies on most to know whether AI-assisted work is actually moving you forward — or just moving faster?